The satisficing vs optimizing debate


On this page I discuss issues connected to the long-standing "Satisficing vs Optimizing" debate. For the benefit of readers who are not familiar with this debate, I ought to point out that its main thesis is that satisficing has an advantage over optimizing. This discussion is related to my Info-Gap campaign.


Overview

I must admit that I find this debate tiresome. Nevertheless, I decided to join the fray so as to make the point that ... the debate is wasteful and counter-productive. After all, it is very easy to show that any "satisficing problem" can be formulated as an equivalent "optimization problem", hence at best the debate is about "style" rather than "substance".

But what is more, it seems that this entire discussion about the presumed superiority of "satisficing" over "optimizing" is conducted without serious attention being given to the fact that obtaining a "satisficing" solution may not be sufficient. Obviously, this is so because a "satisficing" solution may turn out to be dominated, in the Pareto sense, by other "satisficing" solutions. In short, a solution that is better than your "satisficing" solution may well be available to you. So why shouldn't you be able to benefit from it?

My point is then that advocates of the superiority of "satisficing" over "optimizing" must in the first instance make a case for the proposition that it is a priori better to opt for a "good" solution even though we can obtain a "better" solution. Likewise, they must show us why we ought to be satisfied with a "better" solution if we can obtain the "best" solution!

For instance, are they suggesting that I settle for (that is, be satisfied with) 4.25% p.a. interest on my 3-month term deposit if I can get 4.75%?

Or, that I pay A$3.99 for a bar of dark chocolate if I can pay A$2.50?

Of course, it is a commonplace that obtaining the "best" solution is not always an easy task so that, obviously, cases will arise where we would have no other choice but to make do with a solution that is not as good as the "best" solution. But the point brought out by this trivial fact is not that we must therefore base our quest for solutions to problems confronting us on the "satisficing" premise. All that this fact brings out is that we ought to distinguish between two separate issues:

- The desirability of obtaining the "best" solution.

- The difficulties involved in obtaining the "best" solution.

I submit that a good deal of the "Satisficing vs Optimizing" debate is due to a simplistic treatment of this distinction.

But before we proceed to examine the technical aspects of this debate, let us remind ourselves of the basic question on the agenda.

Example

Consider the classical shortest path problem: you want to find the shortest path from node A to node B on a graph. Obviously, this is an optimization, namely a minimization, problem.

An intuitive satisficing counterpart of this problem could, for instance, be the following problem:

Find a path from node A to node B whose length is in the interval ΔT=[tL,tU].

Thus, if ΔT=[125,176], a solution to the satisficing problem is a path from node A to node B whose length is not smaller than 125 and not greater than 176.

Clearly, in general the (optimal) solution to the optimization problem is not necessarily a (feasible) solution to the satisficing problem, and vice versa.

The question is then: in real-world situations, should we formulate our "path problem" as an optimization problem or as a satisficing problem?

The main point that I stress in this discussion is that the above "satisficing path problem" can be formulated as an optimization problem and that the recipe for this task is straightforward.

Therefore, the important thing is not whether we optimize or satisfice, but rather what we optimize and what we satisfice.
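To make the recipe concrete, here is a minimal Python sketch. The graph, its node names other than A and B, and all edge lengths are invented for illustration; only the interval [125,176] comes from the example above. The sketch enumerates the simple paths from A to B by brute force, picks the shortest one, and then recasts the satisficing path problem as the maximization of a 0/1 function f. The equivalence it checks assumes that at least one path falls inside the interval.

```python
from itertools import permutations

# Hypothetical undirected graph; the edge lengths are invented for illustration.
NODES = ["A", "B", "C", "D"]
EDGES = {
    ("A", "C"): 60, ("C", "B"): 50,
    ("A", "D"): 80, ("D", "B"): 70,
    ("C", "D"): 30, ("A", "B"): 200,
}

def edge_length(u, v):
    """Length of the edge between u and v, or None if there is no such edge."""
    return EDGES.get((u, v), EDGES.get((v, u)))

def simple_paths(source, target):
    """Brute-force list of (path, length) for all simple paths from source to target."""
    inner = [n for n in NODES if n not in (source, target)]
    found = []
    for k in range(len(inner) + 1):
        for middle in permutations(inner, k):
            path = (source, *middle, target)
            legs = [edge_length(a, b) for a, b in zip(path, path[1:])]
            if all(leg is not None for leg in legs):
                found.append((path, sum(legs)))
    return found

def satisficing_solutions(paths, t_low, t_high):
    """Paths whose length lies in the interval [t_low, t_high]."""
    return {p for p, d in paths if t_low <= d <= t_high}

def optimal_solutions(paths, t_low, t_high):
    """Maximizers of f(path) = 1 if the length is in [t_low, t_high], else 0."""
    f = {p: int(t_low <= d <= t_high) for p, d in paths}
    best = max(f.values())
    return {p for p in f if f[p] == best}, best

paths = simple_paths("A", "B")
shortest = min(paths, key=lambda pd: pd[1])
feasible = satisficing_solutions(paths, 125, 176)
optimal, best_value = optimal_solutions(paths, 125, 176)

print("shortest path:", shortest)
print("satisficing paths:", sorted(feasible))
print("same set as the maximizers of f:", feasible == optimal and best_value == 1)
```

On this toy graph the shortest path (A, C, B) has length 110, so it is optimal for the minimization problem but not feasible for the satisficing problem, whereas every path with length in [125,176] is a maximizer of f and vice versa.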

Info-Gap decision theory

My decision to enter the ring was prompted by the incessant misguided discussion on this topic in the Info-Gap literature, where it is repeatedly argued that optimal solutions are not robust. Apparently, the Info-Gap folks are unaware of the existence of the vibrant field of robust optimization.

But more than this, Info-Gap proponents seem equally unaware of the simple fact that where robustness is a factor, it can and should be incorporated in the formulation of the optimization model, so that the optimal solutions generated by this model are robust. And if robustness is not a factor, then it will not be incorporated in the model, full stop.

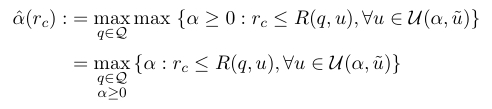

Before noting Info-Gap's position on this matter, I should point out that although in the 2006 edition of the Info-Gap book the robustness model is viewed as a "robust satisficing" model rather than a "robust optimizing" model — as it is viewed in the 2001 edition of the book — Info-Gap's generic robustness model describes a run of the mill ... optimization (maximization) problem:

So Info-Gap's thesis is that in the face of severe uncertainty it is "better" to maximize the robustness (alpha) of the performance constraint rc ≤ R(q,u) than to optimize the performance function R. Details on this model can be found elsewhere.

The Fundamental Theorem

The point that I make here is that the "satisficing vs optimizing" issue is a ... non-issue. What is an issue, indeed an important one, is "what" should we seek to satisfice and "what" should we seek to optimize.

So what, then, is the "satisficing vs optimizing" debate all about?

For one thing, it is not about substance. It is about style, terminology, and buzzwords. Of course, for certain purposes style, terminology, and buzzwords are more important than substance. Hence, the debate.

To make sense of what is at issue here, let us consider the following two problems, where X is a given set and f is a real-valued function on X:

Satisficing Problem: Find an x in X that satisfies a given list of constraints.

Optimization Problem: Find an x' in X that optimizes f(x) over X.

Now consider this:

The Fundamental Theorem of the Satisficing vs Optimizing debate:

Any satisficing problem on Planet Earth can be formulated as an equivalent optimization problem so that any feasible solution to the satisficing problem is optimal with respect to the optimization problem, and vice versa.

A number of comments on this lovely theorem:

- I do not know who first proved this result. I for one have been using it frequently in my research and teaching since about 1973, and I know for a fact that it is being used extensively, for many years now, in the areas of Operations Research, Optimization, Computer Science, Statistics, etc.

- What it implies is that the important question is not whether we should satisfice or optimize. The important question is what should be satisficed and what should be optimized.

- It shows that off-the-cuff claims such as "satisficing is more robust than optimizing" and "it is better to satisfice than to optimize" are misguided.

- Yes, I am aware of Herbert Simon's work in this area.

- Yes, I am aware of Barry Schwartz's work on this topic and of his popular book The Paradox of Choice, and I have even seen his road-show.

The proof of this theorem is so straightforward that I can set it out here and now. It runs as follows:

BOP.

Consider any arbitrary satisficing problem, namely consider any set X and any list of constraints on X. Now, let f denote the characteristic function of the feasible subset of X with respect to the constraints under consideration, namely let

f(x):= 1 if x is in X and it satisfies all the constraints; and f(x):= 0 otherwise.

Then by inspection:

- If x' is a feasible solution to the satisficing problem then x' maximizes f(x) over X.

- If x' in X maximizes f(x) over X and f(x') = 1 (that is, the satisficing problem has at least one feasible solution) then x' is a feasible solution to the satisficing problem.

EOP.

For example, consider the following satisficing problem:

Find an element x' in a given set X such that g(x') > G and h(x') < H, where G and H are given numbers and g and h are given real-valued functions on X.

All we have to do to rephrase this problem as an optimization problem is to let f denote the real-valued function on X defined as follows:

f(x):= 1 iff g(x) > G and h(x) < H ; f(x):=0 otherwise.

The idea is then that the given satisficing problem is equivalent to the optimization problem max {f(x): x in X} in that an x' in X is a feasible solution to the satisficing problem iff x' is an optimal solution to the optimization problem max{f(x): x in X}.
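The construction can be checked mechanically on a small finite set. The sketch below is only an illustration: the set X, the functions g and h, and the thresholds G and H are hypothetical choices, not taken from any particular application. It also makes one edge case visible: when no x satisfies the constraints, the maximum of f is 0 and every x is a maximizer, so the optimal value has to be inspected to detect infeasibility.

```python
def characteristic_function(g, h, G, H):
    """f(x) = 1 iff g(x) > G and h(x) < H; f(x) = 0 otherwise."""
    return lambda x: 1 if (g(x) > G and h(x) < H) else 0

def solve_satisficing(X, g, h, G, H):
    """All feasible solutions of the satisficing problem."""
    return [x for x in X if g(x) > G and h(x) < H]

def solve_optimization(X, f):
    """All optimal solutions of max {f(x): x in X}, with the optimal value."""
    best = max(f(x) for x in X)
    return [x for x in X if f(x) == best], best

# Hypothetical instance: X = {-5, ..., 5}, g(x) = x^2, h(x) = x, G = 4, H = 4.
X = list(range(-5, 6))
g = lambda x: x * x
h = lambda x: x

f = characteristic_function(g, h, G=4, H=4)
feasible = solve_satisficing(X, g, h, G=4, H=4)
optimal, value = solve_optimization(X, f)
print(feasible, optimal, value)

# Infeasible variant: no x in X has x^2 > 100, so the optimal value drops to 0.
f_none = characteristic_function(g, h, G=100, H=4)
optimal_none, value_none = solve_optimization(X, f_none)
print(value_none, len(optimal_none))
```

In the feasible instance the two solution lists coincide and the optimal value is 1; in the infeasible variant the optimal value is 0, which is how the optimization formulation reports that the satisficing problem has no solution.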

Trivialities

I have plenty more to say on the Satisficing vs Optimizing debate. But I am afraid that this will have to wait till I complete a number of much more urgent tasks.

For now, you may wish to get hold of Jan Odhnoff's (1965) paper, whose last paragraph reads as follows:

It seems meaningless to draw more general conclusions from this study than those presented in section 2.2. Hence, that section maybe the conclusion of this paper. In my opinion there is room for both 'optimizing' and 'satisficing' models in business economics. Unfortunately, the difference between 'optimizing' and 'satisficing' is often referred to as a difference in the quality of a certain choice. It is a triviality that an optimal result in an optimization can be an unsatisfactory result in a satisficing model. The best things would therefore be to avoid a general use of these two words.

Jan Odhnoff

On the Techniques of Optimizing and Satisficing

The Swedish Journal of Economics

Vol. 67, No. 1 (Mar., 1965)

pp. 24-39

I fully sympathize with Odhnoff's frustration.

Indeed, as attested by the literature, it is remarkable to what length the "triviality" identified by Jan Odhnoff can be taken. If you are unfamiliar with the "satisficing vs optimizing" debate, use your favorite WWW search engine to look up catch phrases such as

"good is better than best", "why more is less", "advantage of sub-optimal models"

If you are surprised, perhaps amazed or even perplexed, to learn that a sub-optimal solution can be better than an optimal solution, or have an advantage over it, do not panic. Not yet, anyhow. Conserve your anti-panic resources, for you'll surely need them when you hear the really bad news.

The following simple practical example illustrates some of the non-issues that are advanced by proponents of the satisficing vs optimizing debate.

Example

You plan a visit to Paris with your spouse and have to decide what car to hire. There are 5 options, call them C1, C2, C3, C4, C5.

After long deliberations and consultations, you decide that the optimal choice is the small, funky, fuel-efficient C3.

On hearing about this choice, one of your friends points out that this choice, which is optimal for your problem, is not only sub-optimal but actually unsatisfactory in the context of his own problem, which is: what car to hire for a month-long trans-Australia desert race.

Your friend thus concludes: satisficing is better than optimizing!

One need hardly point out that in this context the triviality is so obvious and transparent that you'll immediately be able to see how absurd the argument is.

But all it takes to camouflage such a triviality is an abstraction, some mathematical notation, Greek symbols, and a couple of buzzwords. So let's see how it works.

The first thing you need to do is to create two different but slightly related abstract problems. Let us call them Problem A and Problem B and let sA and sB denote the respective optimal solutions.

So by construction, sA is optimal in the context of Problem A and sB is optimal in the context of Problem B. Therefore, typically sA is sub-optimal in the context of Problem B and sB is sub-optimal in the context of Problem A.

So far so good, but ... not very exciting.

So how about this exciting result: Very often there is a sub-optimal solution, call it yA, that is superior to (better than) the optimal solution sA and there is a sub-optimal solution yB that is superior to (better than) the optimal solution sB.

Yes!!!!!!!!

Try to prove this formally on your own. I shall provide a formal proof after I return from my overseas trip in October.

The really bad news is that respectable professional journals publish this kind of material. Now you can panic, and rightly so.

The following naive example will show you how much mileage can be made of what Jan Odhnoff termed "trivialities".

Example

Let X and U be some sets, let R be a real-valued function on the Cartesian product of these sets, and let u* be some given element of U. Consider now the following seemingly innocuous problem:

Problem A: max {R(x,u*): x in X}

In other words, the objective is to maximize R(x,u*) over x in X. Assume that this problem has feasible solutions and let xA denote an optimal solution to this problem. Thus, R(xA,u*) ≥ R(x,u*) for all x in X.

To be more concrete, consider the case where X is the real line, U=[0,1], u*=0.5 and

R(x,u) = 2ux - x^2

In this case the optimal value of x is xA= 0.5. Note that in this context the feasible solution x0=0 is clearly sub-optimal.

So far so good.

Now, suppose that the survival of Planet Earth critically depends on the validity of the constraint R(x,u) ≥ 0, assuming that we control x and Nature controls u.

In this case, to save Planet Earth we consider this problem:

Problem B: Find an xB in X such that R(xB,u) ≥ 0, for all u in U.

Note that if, as above, X is the real line and U=[0,1], then this problem has only one feasible solution, namely xB = x0= 0.

In summary then: for the concrete instance where X is the real line, U=[0,1], and u*=0.5 we have:

- The optimal solution to Problem A, namely xA= 0.5, is not as good as the sub-optimal solution x0= 0, when they are compared in the context of Problem B. In fact, xA= 0.5 is not even feasible in the context of Problem B.
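The numbers in this instance are easy to check numerically. The following is a rough sketch, not a proof: it replaces the real line by a grid on [-2, 2] and U = [0,1] by a grid with step 0.01, both of which are assumptions made only to keep the check finite. Problem A is solved by picking the grid point that maximizes R(x,u*), and Problem B by keeping the grid points for which R(x,u) ≥ 0 holds at every grid value of u.

```python
def R(x, u):
    """Performance function of the example: R(x,u) = 2ux - x^2."""
    return 2 * u * x - x ** 2

# Finite grids standing in for X (the real line) and U = [0,1]; an assumption.
X_GRID = [i / 100 for i in range(-200, 201)]
U_GRID = [i / 100 for i in range(101)]
u_star = 0.5

# Problem A: maximize R(x, u*) over x.
x_A = max(X_GRID, key=lambda x: R(x, u_star))

# Problem B: find all x with R(x, u) >= 0 for every u in U.
feasible_B = [x for x in X_GRID if all(R(x, u) >= 0 for u in U_GRID)]

print("Problem A optimum:", x_A, "with value", R(x_A, u_star))
print("Problem B feasible points:", feasible_B)
print("worst case of x_A over U:", min(R(x_A, u) for u in U_GRID))
```

On these grids the maximizer of Problem A is 0.5, the only point that passes the Problem B test is 0, and the worst case of R(0.5,u) over U is negative (it occurs at u = 0), which is exactly why xA is infeasible for Problem B.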

If you are a bit puzzled regarding the logic of this Example, join the queue.

Why on earth should we expect an optimal solution to Problem A to retain its superiority over other solutions in the context of Problem B ?!?!?!?!

Naturally, we would counter-argue as follows:

- The "best" solution to the satisficing problem, Problem B, namely xB = x0= 0, is sub-optimal in the context of the optimization problem, Problem A !??!?!?!?!?!!?!?!?!?!?!?!??!!?

So what?!

It is sad, very sad, that such convoluted, misguided, arguments are used to "show" that satisficing is better than optimizing.

What a mess!

The following short quote vividly illustrates how pointless the "satisficing vs optimizing" debate is (emphasis is mine):

At some point in his deliberations a decision-maker finds he is seeking an optimal value (or perhaps a set of optima under various conditions). His aide, the operational research worker, points out that the cost of finding the optimum is high. The decision-maker takes this new piece of information into consideration and as part of his own decision says "All right. I will be satisfied with something within 5 per cent of optimum." The OR man may then be able to develop a less costly technique. For example, if branch-and-bound is to be used, he may modify it to accept an improvement over a current solution only if it is better by the necessary margin. Whether we now say that the decision-maker is an optimizer or satisficer is, I suggest, a ticklish point which we should not worry too much about.

M. Benham

Reply to a comment by C.B. Chapman

Operational Research Quarterly 24(2), p. 311, 1973

I have plenty more to say about such general "trivialities".

If you are truly eager to know more about what I have to say, feel free to contact me.

Next.

Bounded rationality

The case against optimization is often based on references to Simon's theory of bounded rationality. It is therefore important to note that this theory does not categorically assert that it is better to satisfice than optimize. I find the following quote informative (the color and oversized font is mine):

There are many excellent treatments of bounded rationality (see, e.g., Simon (1982a, 1982b, 1997) and Rubinstein (1998)). Appendix A provides a brief survey of the mainstream of bounded rationality research. This research represents an important advance in the theory of decision making; its importance is likely to increase as the scope of decision-making grows. However, the research has a common theme, namely, that if a decision maker could optimize, it surely should do so. Only the real-world constraints on its capabilities prevent it from achieving the optimum. By necessity, it is forced to compromise, but the notion of optimality remains intact. Bounded rationality is thus an approximation to substantive rationality, and remains as faithful as possible to the fundamental premises of that view.

Wynn C. Stirling (2003, p. 10)

Satisficing Games and Decision Making

Cambridge University Press

So when I am in a good mood I argue as follows:

If you can optimize, then you surely should do so. If you can't, then do the best you can. But never ever use the "bounded rationality" argument as an excuse for a simplistic, quick-and-dirty "satisficing job".

I cannot tell you here and now what I argue when I am in a bad mood. But, we can discuss this over a cup of coffee (skinny latte, no sugar, please).

Talking about optimization.

It is amazing what kind of misconceptions some analysts have about optimization, its role in decision making and management, its limitations and its relation to other methodologies.

For instance, read this (color is mine):

From Optimization to Adaptation:

Shifting Paradigms in Environmental Management and Their Application to Remedial Decisions.

ABSTRACT

Current uncertainties in our understanding of ecosystems require shifting from optimization-based management to an adaptive management paradigm. Risk managers routinely make suboptimal decisions because they are forced to predict environmental response to different management policies in the face of complex environmental challenges, changing environmental conditions, and even changing social priorities. Rather than force risk managers to make single suboptimal management choices, adaptive management explicitly acknowledges the uncertainties at the time of the decision, providing mechanisms to design and institute a set of more flexible alternatives that can be monitored to gain information and reduce the uncertainties associated with future management decisions. Although adaptive management concepts were introduced more than 20 y ago, their implementation has often been limited or piecemeal, especially in remedial decision making. We believe that viable tools exist for using adaptive management more fully. In this commentary, we propose that an adaptive management approach combined with multicriteria decision analysis techniques would result in a more efficient management decision-making process as well as more effective environmental management strategies. A preliminary framework combining the 2 concepts is proposed for future testing and discussion.

Igor Linkov, F Kyle Satterstrom, Gregory A Kiker, Todd S Bridges, Sally L Benjamin, David A Belluck

Integrated Environmental Assessment and Management

Volume 2, Number 1, pp. 92-98, 2006

Are we to understand from this that "optimization" cannot deal with uncertainty?! Are we to conclude that "optimization" is not adaptive? And what about multicriteria decision analysis techniques: don't they offer, among other things, something called Pareto Optimization?

I shall address this quote, and the article itself, in due course. Let me just point out here and now that "optimization-based management" and "adaptive management" are not mutually exclusive. That is, there is no reason why "optimization-based management" cannot be adaptive and why "adaptive management" cannot be "optimization-based".

When I read abstracts like this I do not know whether I should laugh or cry. But seriously, this is not funny, not funny at all.

Pareto Efficiency

The term Pareto optimization refers to the area of optimization that concerns itself with the modeling, analysis and solution of problems that require the elimination of dominated solutions from the decision space of the problem considered. The idea is attributed to Vilfredo Pareto (1848-1923), an Italian economist and sociologist. He was a mathematician/physicist by training, and started his professional career as an engineer.

In plain words, a Pareto solution to a decision problem has the property that it cannot be improved with respect to any criterion without its performance worsening with regard to some other criterion.

The English translation (from French) of the original phrasing of the idea is as follows:

We will say that the members of a collectivity enjoy maximum ophelimity in a certain position when it is impossible to find a way of moving from that position very slightly in such a manner that the ophelimity enjoyed by each of the individuals of that collectivity increases or decreases. That is to say, any small displacement in departing from that position necessarily has the effect of increasing the ophelimity which certain individuals enjoy, and decreasing that which others enjoy, of being agreeable to some, and disagreeable to others.

Vilfredo Pareto

Manual of Political Economy (1906, p. 261)

(Just in case: ophelimity = economic satisfaction)

In WIKIPEDIA, Pareto Efficiency, or rather Inefficiency, is described as follows:

An economic system that is Pareto inefficient implies that a certain change in allocation of goods (for example) may result in some individuals being made "better off" with no individual being made worse off, and therefore can be made more Pareto efficient through a Pareto improvement. Here 'better off' is often interpreted as "put in a preferred position." It is commonly accepted that outcomes that are not Pareto efficient are to be avoided, and therefore Pareto efficiency is an important criterion for evaluating economic systems and public policies.

In other words, a solution to a problem is Pareto Efficient if it is not "dominated" by other solutions to the problem.

For example, if you like — and I mean LIKE — both mangoes and dark chocolate, then a solution yielding 4 mangoes and 5 dark chocolate bars is dominated by a solution yielding 4 mangoes and 6 dark chocolate bars.
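The dominance test itself takes only a few lines. The sketch below assumes that every criterion is of the "more is better" kind and that a solution is represented as a tuple (mangoes, dark chocolate bars). The candidate list and the satisficing thresholds (at least 3 of each) are made up for illustration; only the pair (4, 5) versus (4, 6) comes from the example above.

```python
def dominates(a, b):
    """True if a is at least as good as b on every criterion and strictly better on one."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))

def pareto_front(solutions):
    """Solutions that are not dominated by any other solution in the list."""
    return [s for s in solutions if not any(dominates(t, s) for t in solutions)]

def satisfices(s, thresholds):
    """True if s meets every minimum requirement."""
    return all(x >= t for x, t in zip(s, thresholds))

# (mangoes, dark chocolate bars); hypothetical candidates.
CANDIDATES = [(4, 5), (4, 6), (6, 3), (2, 2)]
THRESHOLDS = (3, 3)  # hypothetical "good enough" levels

good_enough = [s for s in CANDIDATES if satisfices(s, THRESHOLDS)]
front = pareto_front(CANDIDATES)

print("satisficing:", good_enough)
print("Pareto efficient:", front)
print("satisficing but dominated:", [s for s in good_enough if s not in front])
```

Here (4, 5) meets both thresholds and is therefore a perfectly "satisficing" solution, yet it is dominated by (4, 6), which is equally available.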

The point I want to make via this example is that you may run into great trouble should you propose a solution that is (Pareto) dominated by another solution. In fact, you may even risk losing your job!

The bottom line is that often it is not good enough to generate a feasible solution, even if this solution satisfies some pre-determined basic requirements.

This is so because normally, we (individuals, organizations etc.) seek "good" solutions to the problems confronting us. So, should it transpire that the solution we propose for a particular problem is not as good as another solution that is equally available to us, the implication would be very clear. We would be deemed incompetent, indeed derelict, in our duty, especially if the "satisficing" solution that we propose is to be adopted by others who are dependent on our proposal and/or pay for it.

This project is related to some of my other campaigns, namely the Worst-Case Analysis / Maximin Campaign, Robust Decision-Making Campaign, Responsible Decision-Making Campaign and the Info-Gap Campaign.

The Black Swan

Only time will tell what impact (if any) Nassim Taleb's recent popular and controversial book The Black Swan: The Impact of the Highly Improbable will have on the field of decision-making under severe uncertainty.

I, for one, hope that the issues raised in this book and in its predecessor, Fooled by Randomness: The Hidden Role of Chance in the Markets and in Life, will be instrumental in helping decision-makers to identify voodoo decision theories -- such as Info-Gap decision theory -- that promise robust decisions under severe uncertainty.

I fear though, in view of my experience of the past 40 years, that the huge success of the Black Swan will inspire a new wave of voodoo decision theories, purportedly capable of ... "domesticating" black swans and preempting the discovery of ... purple swans!

We shall have to wait and see.

For those who have "been in hiding" I should note that Taleb has become quite a celebrity. According to the Prudent Investor Newsletters (Tuesday, June 3, 2008):

- Mr. Taleb charges about $60,000 per speaking engagement and does about 30 presentations a year "to bankers, economists, traders, even to Nasa, the US Fire Administration and the Department of Homeland Security" according to Timesonline’s Bryan Appleyard.

- He recently got $4 million as advance payment for his next much awaited book.

- He earned $35-$40 MILLION on a huge Black Swan event, the biggest stockmarket crash in modern history: Black Monday, October 19, 1987.

So, if you haven’t heard him in person you can easily find on the WWW numerous videos of his interviews.

Here is a link to a very short (2:45 min) clip, recorded by Taleb himself, apparently at Heathrow Airport, of 10 tips on how to deal with Black Swans, and life in general.

- Scepticism is effortful and costly. It is better to be sceptical about matters of large consequences, and be imperfect, foolish and human in the small and the aesthetic.

- Go to parties. You can't even start to know what you may find on the envelope of serendipity. If you suffer from agoraphobia, send colleagues.

- It's not a good idea to take a forecast from someone wearing a tie. If possible, tease people who take themselves and their knowledge too seriously.

- Wear your best for your execution and stand dignified. Your last recourse against randomness is how you act -- if you can't control outcomes, you can control the elegance of your behaviour. You will always have the last word.

- Don't disturb complicated systems that have been around for a very long time. We don't understand their logic. Don't pollute the planet. Leave it the way we found it, regardless of scientific 'evidence'.

- Learn to fail with pride -- and do so fast and cleanly. Maximise trial and error -- by mastering the error part.

- Avoid losers. If you hear someone use the words 'impossible', 'never', 'too difficult' too often, drop him or her from your social network. Never take 'no' for an answer (conversely, take most 'yeses' as 'most probably').

- Don't read newspapers for the news (just for the gossip and, of course, profiles of authors). The best filter to know if the news matters is if you hear it in cafes, restaurants ... or (again) parties.

- Hard work will get you a professorship or a BMW. You need both work and luck for a Booker, a Nobel or a private jet.

- Answer e-mails from junior people before more senior ones. Junior people have further to go and tend to remember who slighted them.

It is interesting to juxtapose Prof. Taleb’s thesis in The Black Swan that severe uncertainty makes (reliable) prediction in the Socio/economic/political spheres impossible, with the polar position taken by his colleague, Prof. Bruce Bueno de Mesquita, who actually specializes in predicting the future.

One thing for sure: Sooner or later info-gap scholars will find a simple reliable recipe for handling Black Swans!

Stay tuned!

And what do you know ?????? See Review 17

It was bound to happen!!

New Nostradamuses

Not only professionals specializing in "decision under uncertainty", but also the proverbial "man in the street", take it for granted that the ability to accurately predict future events is one of the most onerous challenges facing humankind — especially persons in authority, persons responsible for the management of business or economic organizations etc.

A notable exception to this rule is the "New Nostradamus": Prof. Bruce Bueno de Mesquita, a political science professor at New York University and Senior Fellow at the Hoover Institution, who, according to Good Magazine, specializes in predicting future events, at least in the area of international conflicts.

The claim is that this distinguished political scientist can actually predict the outcome of any international conflict!

To do this Prof. Bueno de Mesquita does not use a Crystal Ball, but a thoroughly scientific method which, he claims, is based on a branch of applied mathematics called Game Theory.

According to GoodReads.com,

" ... Bruce Bueno de Mesquita is a political scientist, professor at New York University, and senior fellow at the Hoover Institution. He specializes in international relations, foreign policy, and nation building. He is also one of the authors of the selectorate theory.

He has founded a company, Mesquita & Roundell, that specializes in making political and foreign-policy forecasts using a computer model based on game theory and rational choice theory. He is also the director of New York University's Alexander Hamilton Center for Political Economy.

He was featured as the primary subject in the documentary on the History Channel in December 2008. The show, titled Next Nostradamus, details how the scientist is using computer algorithms to predict future world events ..."

Here is an interview with Prof. Bueno de Mesquita (with Riz Khan - The art and science of prediction - 09 Jan 08):

And here is a 20-minute lecture on the ... future of Iran (TED, February 2009):

Apparently, all you need to accomplish this is a computer, expert-knowledge on Iran, and game theory!

Some of the predictions attributed to Prof. Bueno de Mesquita are:

- The second Palestinian Intifada and the death of the Mideast peace process, two years before this came to pass.

- The succession of the Russian leader Leonid Brezhnev by Yuri Andropov, who at the time was not even considered a contender.

- The voting out of office of Daniel Ortega and the Sandinistas in Nicaragua, two years before this happened.

- The harsh crack down on dissidents by China's hardliners four months before the Tiananmen Square incident.

- France's hair's-breadth passage of the European Union's Maastricht Treaty.

- The exact implementation of the 1998 Good Friday Agreement between Britain and the IRA.

- China's reclaiming of Hong Kong and the exact manner the handover would take place, 12 years before it happened.

Impressive, isn't it!

As might be expected, these and similar claims by Prof. Bueno de Mesquita have sparked a vigorous debate not only in the professional journals but also on the WWW. Interested readers can consult this material to see for themselves whether Bueno de Mesquita's claims attest to a major scientific breakthrough or ... voodoo mathematics.

Also, in addition to consulting this material you may want to have a look at a short video clip by Matt Brawn, which he compiled in response to a short note entitled This man can actually predict the future!.

Of particular interest is, of course, the "success" rate of Prof. Bueno de Mesquita's predictions: over 90%. Yes, over ninety percent!

Here is Trevor Black's common sense reaction to this claim:

I am a little skeptical about anyone who claims to have a 90% success rate. I just don't buy it. Especially when they say that they can explain away a lot of the other 10%. If you come to me and tell me you have a model that gets it right 60% or 70% of the time, I may listen. Skeptically, but I will listen. 90% and I start to smell something.

All I wish to add here is that Prof. Bueno de Mesquita makes his predictions under conditions of "severe uncertainty" which of course render them hugely vulnerable to what Prof. Nassim Taleb dubs the Black Swan phenomenon.

Hence, the very proposition that such predictions can be made at all, let alone be reliable, is diametrically opposed to Nassim Taleb's categorical rejection of any such position. For his thesis is that Black Swans are totally outside the purview of mathematical treatment, especially by models that are based on expected utility theory and rational choice theory. Interestingly, though, this is precisely the stuff that Prof. Bueno de Mesquita's method is made of: expected utility theory and rational choice theory! Even more interesting is the fact that Nassim Taleb and Bueno de Mesquita are staff members of the same academic institution, namely New York University. So, all that's left to say is: Go figure!

As indicated above, the debate over Bueno de Mesquita's theories is not new. It has been ongoing, in the relevant academic literature, at least since the publication of his book The War Trap (1981).

For an idea of the kind of criticism sparked by his work, take a look at the quotes I provide from articles that are critical of Bueno de Mesquita's theories.

Of course, there are other New Nostradamuses around.

According to the Associated Press, the latest (2009, Mar 4, 4:39 AM EST) news from Russia about the future of the USA is that

"... President Barack Obama will order martial law this year, the U.S. will split into six rump-states before 2011, and Russia and China will become the backbones of a new world order ..."

Apparently this prediction was made by Igor Panarin (right), Dean of the Russian Foreign Ministry diplomatic academy and a regular on Russia's state-controlled TV channels (see full AP news report).

Regarding the future of Russia,

"You don't sound too hopeful".

"Hopeful? Please, I am Russian. I live in a land of mad hopes, long queues, lies and humiliations. They say about Russia we never had a happy present, only a cruel past and a quite amazing future ..."

Malcolm Bradbury

To the Hermitage (2000, p. 347)

We should therefore be reminded of J K Galbraith's (1908-2006) poignant observation:

There are two classes of forecasters: those who don't know and those who don't know they don't know.

And in the same vein,

The future is just what we invent in the present to put an order over the past.

Malcolm Bradbury

Doctor Criminale (1992, p. 328)

So, we shall have to wait and see.

And how about this more recent piece by Heath Gilmore and Brian Robins in the Sydney Morning Herald (March 27, 2009):

"... COUPLES wondering if the love will last could find out if theirs is a match made in heaven by subjecting themselves to a mathematical test.

A professor at Oxford University and his team have perfected a model whereby they can calculate whether the relationship will succeed.

In a study of 700 couples, Professor James Murray, a maths expert, predicted the divorce rate with 94 per cent accuracy.

His calculations were based on 15-minute conversations between couples who were asked to sit opposite each other in a room on their own and talk about a contentious issue, such as money, sex or relations with their in-laws.

Professor Murray and his colleagues recorded the conversations and awarded each husband and wife positive or negative points depending on what was said. ..."

Such interviews should perhaps be made mandatory for all couples registering their marriage.

More details on the mathematics of marriage can be found in The Mathematics of Marriage: Dynamic Nonlinear Models by J.M. Gottman, J.D. Murray, C. Swanson, R. Tyson, and K.R. Swanson (MIT Press, Cambridge, MA, 2002).

On a more positive note, though, here is an online Oracle from Melbourne (Australia: the land of the real Black Swan!).

You may wish to consult this friendly 24/7 facility about important "Yes/No" questions that you no doubt have about the future.

More on this and related topics can be found in the pages of the Worst-Case Analysis / Maximin Campaign, Severe Uncertainty, and the Info-Gap Campaign.

Recent Articles, Working Papers, Notes

Also, see my complete list of articles

- Sniedovich, M. (2012) Fooled by local robustness, Risk Analysis, in press.

- Sniedovich, M. (2012) Black swans, new Nostradamuses, voodoo decision theories and the science of decision-making in the face of severe uncertainty, International Transactions in Operational Research, in press.

- Sniedovich, M. (2011) A classic decision theoretic perspective on worst-case analysis, Applications of Mathematics, 56(5), 499-509.

- Sniedovich, M. (2011) Dynamic programming: introductory concepts, in Wiley Encyclopedia of Operations Research and Management Science (EORMS), Wiley.

- Caserta, M., Voss, S., Sniedovich, M. (2011) Applying the corridor method to a blocks relocation problem, OR Spectrum, 33(4), 915-929.

- Sniedovich, M. (2011) Dynamic Programming: Foundations and Principles, Second Edition, Taylor & Francis.

- Sniedovich, M. (2010) A bird's view of Info-Gap decision theory, Journal of Risk Finance, 11(3), 268-283.

- Sniedovich, M. (2009) Modeling of robustness against severe uncertainty, pp. 33-42, Proceedings of the 10th International Symposium on Operational Research, SOR'09, Nova Gorica, Slovenia, September 23-25, 2009.

- Sniedovich, M. (2009) A Critique of Info-Gap Robustness Model. In: Martorell et al. (eds), Safety, Reliability and Risk Analysis: Theory, Methods and Applications, pp. 2071-2079, Taylor and Francis Group, London.

- Sniedovich, M. (2009) A Classical Decision Theoretic Perspective on Worst-Case Analysis, Working Paper No. MS-03-09, Department of Mathematics and Statistics, The University of Melbourne. (PDF File)

- Caserta, M., Voss, S., Sniedovich, M. (2008) The corridor method - A general solution concept with application to the blocks relocation problem. In: A. Bruzzone, F. Longo, Y. Merkuriev, G. Mirabelli and M.A. Piera (eds.), 11th International Workshop on Harbour, Maritime and Multimodal Logistics Modeling and Simulation, DIPTEM, Genova, 89-94.

- Sniedovich, M. (2008) FAQS about Info-Gap Decision Theory, Working Paper No. MS-12-08, Department of Mathematics and Statistics, The University of Melbourne. (PDF File)

- Sniedovich, M. (2008) A Call for the Reassessment of the Use and Promotion of Info-Gap Decision Theory in Australia (PDF File)

- Sniedovich, M. (2008) Info-Gap decision theory and the small applied world of environmental decision-making, Working Paper No. MS-11-08

This is a response to comments made by Mark Burgman on my criticism of Info-Gap (PDF file)

- Sniedovich, M. (2008) A call for the reassessment of Info-Gap decision theory, Decision Point, 24, 10.

- Sniedovich, M. (2008) From Shakespeare to Wald: modeling worst-case analysis in the face of severe uncertainty, Decision Point, 22, 8-9.

- Sniedovich, M. (2008) Wald's Maximin model: a treasure in disguise!, Journal of Risk Finance, 9(3), 287-291.

- Sniedovich, M. (2008) Anatomy of a Misguided Maximin formulation of Info-Gap's Robustness Model (PDF File)

In this paper I explain, again, the misconceptions that Info-Gap proponents seem to have regarding the relationship between Info-Gap's robustness model and Wald's Maximin model.

- Sniedovich, M. (2008) The Mighty Maximin! (PDF File)

This paper is dedicated to the modeling aspects of Maximin and robust optimization.

- Sniedovich, M. (2007) The art and science of modeling decision-making under severe uncertainty, Decision Making in Manufacturing and Services, 1-2, 111-136. (PDF File)

- Sniedovich, M. (2007) Crystal-Clear Answers to Two FAQs about Info-Gap (PDF File)

In this paper I examine the two fundamental flaws in Info-Gap decision theory, and the flawed attempts to shrug off my criticism of Info-Gap decision theory.

- My reply (PDF File) to Ben-Haim's response to one of my papers. (April 22, 2007)

This is an exciting development!

- Ben-Haim's response confirms my assessment of Info-Gap. It is clear that Info-Gap is fundamentally flawed and therefore unsuitable for decision-making under severe uncertainty.

- Ben-Haim is not familiar with the fundamental concept of a point estimate. He does not realize that a function can be a point estimate of another function.

So when you read my papers, make sure that you do not misinterpret the notion of a point estimate. The phrase "A is a point estimate of B" simply means that A is an element of the same topological space that B belongs to. Thus, if B is, say, a probability density function and A is a point estimate of B, then A is a probability density function belonging to the same (assumed) set (family) of probability density functions.

Ben-Haim mistakenly assumes that a point estimate is a point in a Euclidean space and therefore that a point estimate cannot be, say, a function. This is incredible!

- A formal proof that Info-Gap is Wald's Maximin Principle in disguise. (December 31, 2006)

This is a very short article entitled Eureka! Info-Gap is Worst Case (maximin) in Disguise! (PDF File)

It shows that Info-Gap is not a new theory but rather a simple instance of Wald's famous Maximin Principle dating back to 1945, which in turn goes back to von Neumann's work on Maximin problems in the context of Game Theory (1928).

- A proof that Info-Gap's uncertainty model is fundamentally flawed. (December 31, 2006)

This is a very short article entitled The Fundamental Flaw in Info-Gap's Uncertainty Model (PDF File).

It shows that because Info-Gap deploys a single point estimate under severe uncertainty, there is no reason to believe that the solutions it generates are likely to be robust.

- A math-free explanation of the flaw in Info-Gap. (December 31, 2006)

This is a very short article entitled The GAP in Info-Gap (PDF File).

It is a math-free version of the paper above. Read it if you are allergic to math.

- A long essay entitled What's Wrong with Info-Gap? An Operations Research Perspective (PDF File) (December 31, 2006).

This is a paper that I presented at the ASOR Recent Advances in Operations Research (PDF File) mini-conference (December 1, 2006, Melbourne, Australia).

Recent Lectures, Seminars, Presentations

If your organization is promoting Info-Gap, I suggest that you invite me for a seminar at your place. I promise to deliver a lively, informative, entertaining and convincing presentation explaining why it is not a good idea to use — let alone promote — Info-Gap as a decision-making tool.

Here is a list of relevant lectures/seminars on this topic that I gave in the last two years.

- ASOR Recent Advances, 2011, Melbourne, Australia, November 16, 2011. Presentation: The Power of the (peer-reviewed) Word. (PDF file).

- Alex Rubinov Memorial Lecture The Art, Science, and Joy of (mathematical) Decision-Making, November 7, 2011, The University of Ballarat. (PDF file).

- Black Swans, Modern Nostradamuses, Voodoo Decision Theories, and the Science of Decision-Making in the Face of Severe Uncertainty (PDF File)

(Invited tutorial, ALIO/INFORMS Conference, Buenos Aires, Argentina, July 6-9, 2010).

- A Critique of Info-Gap Decision theory: From Voodoo Decision-Making to Voodoo Economics (PDF File)

(Recent Advances in OR, RMIT, Melbourne, Australia, November 25, 2009)

- Robust decision-making in the face of severe uncertainty (PDF File)

(GRIPS, Tokyo, Japan, October 16, 2009)

- Decision-making in the face of severe uncertainty (PDF File)

(KORDS'09 Conference, Vilnius, Lithuania, September 30 - October 3, 2009)

- Modeling robustness against severe uncertainty (PDF File)

(SOR'09 Conference, Nova Gorica, Slovenia, September 23-25, 2009)

- How do you recognize a Voodoo decision theory? (PDF File)

(School of Mathematical and Geospatial Sciences, RMIT, June 26, 2009).

- Black Swans, Modern Nostradamuses, Voodoo Decision Theories, Info-Gaps, and the Science of Decision-Making in the Face of Severe Uncertainty (PDF File)

(Department of Econometrics and Business Statistics, Monash University, May 8, 2009).

- The Rise and Rise of Voodoo Decision Theory.

ASOR Recent Advances, Deakin University, November 26, 2008. This presentation was based on the pages on my website (voodoo.moshe-online.com).

- Responsible Decision-Making in the face of Severe Uncertainty (PDF File)

(Singapore Management University, Singapore, September 29, 2008)

- A Critique of Info-Gap's Robustness Model (PDF File)

(ESREL/SRA 2008 Conference, Valencia, Spain, September 22-25, 2008)

- Robust Decision-Making in the Face of Severe Uncertainty (PDF File)

(Technion, Haifa, Israel, September 15, 2008)

- The Art and Science of Robust Decision-Making (PDF File)

(AIRO 2008 Conference, Ischia, Italy, September 8-11, 2008)

- The Fundamental Flaws in Info-Gap Decision Theory (PDF File)

(CSIRO, Canberra, July 9, 2008)

- Responsible Decision-Making in the Face of Severe Uncertainty (PDF File)

(OR Conference, ADFA, Canberra, July 7-8, 2008)

- Responsible Decision-Making in the Face of Severe Uncertainty (PDF File)

(University of Sydney Seminar, May 16, 2008)

- Decision-Making Under Severe Uncertainty: An Australian, Operational Research Perspective (PDF File)

(ASOR National Conference, Melbourne, December 3-5, 2007)

- A Critique of Info-Gap (PDF File)

(SRA 2007 Conference, Hobart, August 20, 2007)

- What exactly is wrong with Info-Gap? A Decision Theoretic Perspective (PDF File)

(MS Colloquium, University of Melbourne, August 1, 2007)

- A Formal Look at Info-Gap Theory (PDF File)

(ORSUM Seminar, University of Melbourne, May 21, 2007)

- The Art and Science of Decision-Making Under Severe Uncertainty (PDF File)

(ACERA seminar, University of Melbourne, May 4, 2007)

- What exactly is Info-Gap? An OR perspective. (PDF File)

ASOR Recent Advances in Operations Research mini-conference (December 1, 2006, Melbourne, Australia).